MdpAIR | Daytona Beach Drone Company

MdpAIR provides aerial photography and video in Daytona Beach. We're a drone company offering a range of drone (UAS/UAV) services across the Port Orange, New Smyrna, and Ormond Beach areas. Real estate photography, mapping, and other aerial services are our specialty. Whether the job is small or large, we can assist with roofing inspections, property diagrams, 3D mapping, aerial photography, search and rescue, and much more. Whether you're a private client or a government entity such as a city, county, or local municipality, we can provide fast response and assistance with any job from the air. All of our pilots are FAA-certified and keep their skills current through additional training and continuing education.

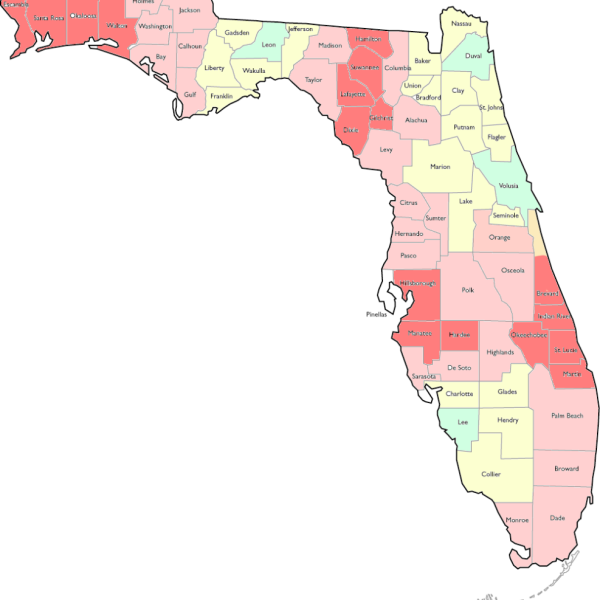

Service Areas

Where the sky is not the limit but just the beginning! As an FAA Part 107 certified drone pilot, I am thrilled to offer exceptional aerial photography and videography services across Central Florida and beyond. At MdpAIR, every flight is an opportunity to create something extraordinary. Whether your project is in Central Florida or farther afield, I will come to you and elevate it with stunning aerial imagery.

Why Choose MdpAIR?

- FAA Part 107 Certified: Safety and compliance are my top priorities. Being FAA Part 107 certified means I operate with the highest standards of safety and professionalism.

- Central Florida Expertise: With an in-depth knowledge of Central Florida’s diverse landscapes and urban settings, MdpAIR is ideally positioned to capture the best aerial views this vibrant area has to offer.

- Ready for Travel: Your project’s location is no barrier. MdpAIR is equipped and prepared to travel, bringing high-quality aerial imaging services to your doorstep, wherever that may be.

MdpAIR is the best source for your strategic solutions

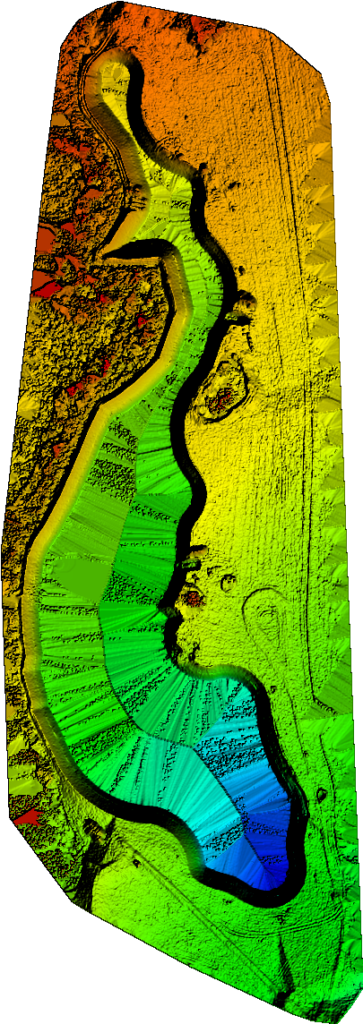

In the rapidly evolving world of construction, staying ahead of the curve is not just an advantage; it’s a necessity. That’s where our drone service, specializing in mapping and aerial imagery, comes into play, offering an innovative solution for construction projects of all sizes.

Unparalleled Perspective from Above

The heart of our service lies in providing a bird’s-eye view of construction sites. This vantage point is invaluable for project managers, architects, and engineers, who can now access detailed, high-resolution images and videos that traditional methods can’t match. Our drones capture every angle of a construction site, ensuring no detail is overlooked.

Mapping

Project Maps

We provide site mapping for your project

Inspections

Jobsite Inspections

Same-day aerial views

Lessons

Learn to Fly

Drone flight classes

Ready to Elevate Your Project with Aerial Footage?

Get in touch with us today to explore how our drone mapping and aerial imagery services can streamline your construction processes. Our team is eager to demonstrate how our technology can improve your project's efficiency, safety, and decision-making. Contact us: HELLO@mdpAIR.com or 386-316-0917

Projects